What if a 14-year-old solved a medical problem that billion-dollar companies haven’t?

Meet EYEVA™ — the AI wearable that detects eye drift in real time and instantly alerts the user, built by Aaryan Balani, age 14, for a fraction of the cost of existing solutions.

Intermittent strabismus affects millions of people worldwide. When the eye drifts, the person rarely knows it’s happening — and commercial detection devices cost $15,000–$30,000, putting them out of reach for most patients.

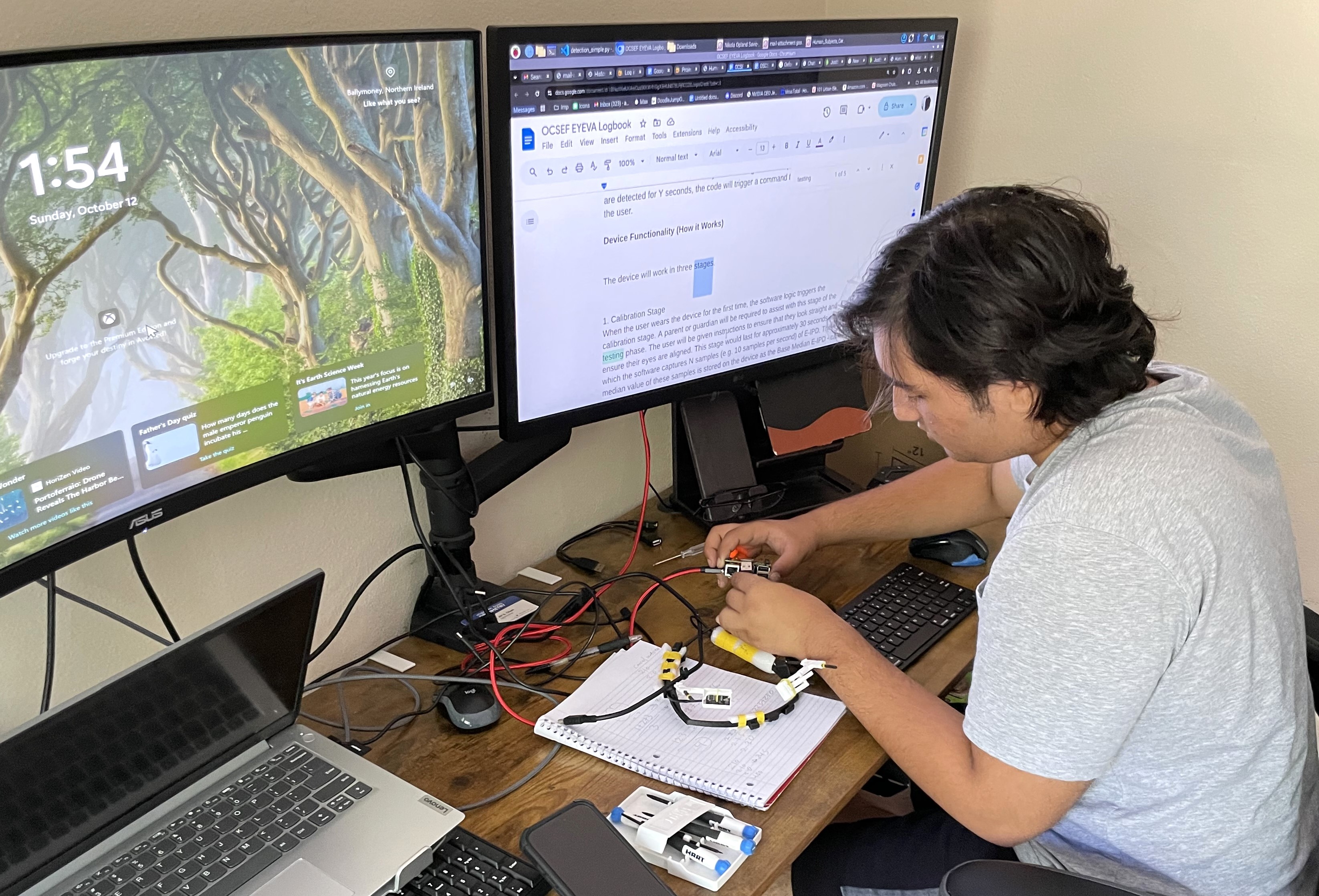

Aaryan Balani, an 8th grader from Cerritos, California, spent a year teaching himself machine learning and building a solution. EYEVA™ is a custom-trained AI wearable headband that detects eye drift in real time and alerts the user instantly. It cost $345 to build.

Built by a 14-Year-Old

Aaryan Balani, 8th grade, Cerritos, CA


2026

The Problem. The Gap. The Solution.

The Problem

Intermittent strabismus causes the eye to drift unpredictably. Most patients have no real-time awareness when it happens — making training and correction significantly harder. The condition affects an estimated 100 million people worldwide.

The Gap

Existing clinical eye-tracking devices cost $15,000–$30,000 and are confined to clinical settings. No affordable, portable, real-time alert device exists for daily use. Drift episodes can go undetected for hours, days, or longer.

The Solution

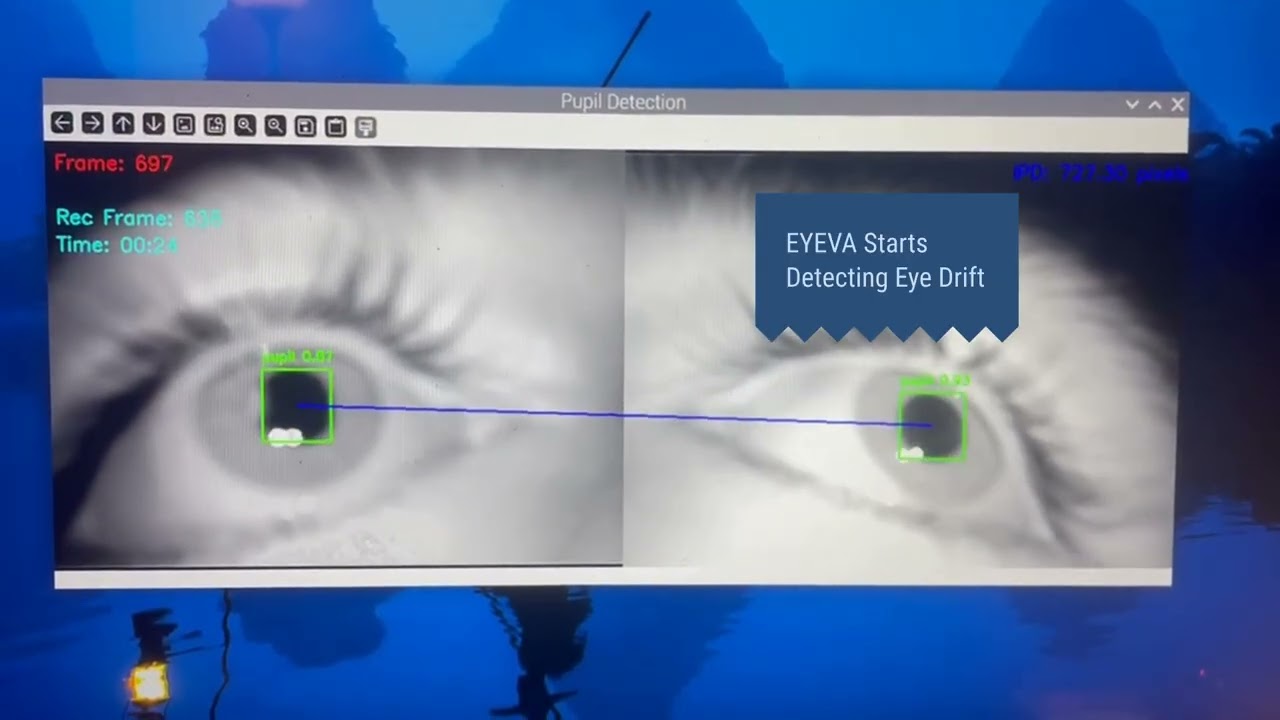

EYEVA™ uses a custom-trained YOLOv11 machine learning model, running on a Raspberry Pi 5 with an AI accelerator and infrared cameras, to detect drift events in real time and deliver an immediate biofeedback alert.

Technical Stack

EYEVA™ runs on a Raspberry Pi 5 with an AI accelerator, infrared cameras, and a custom-trained YOLOv11 model optimized for real-time ocular displacement detection.
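The page does not describe how per-frame detections become an alert, so the following is only a minimal sketch of one plausible approach, not EYEVA™'s actual logic. It assumes the model yields a pupil center in pixels for each frame, and it raises an alert once the center stays farther than a threshold from a calibrated baseline for several consecutive frames. The class name `DriftDetector`, the parameters `threshold_px` and `min_frames`, and their default values are all hypothetical.

```python
from dataclasses import dataclass, field
from math import hypot
from typing import Optional, Tuple

Point = Tuple[float, float]


@dataclass
class DriftDetector:
    """Hypothetical debounce logic; not taken from the EYEVA source."""
    baseline: Point             # pupil center (pixels) recorded while the eye is aligned
    threshold_px: float = 10.0  # assumed displacement limit, in pixels
    min_frames: int = 3         # assumed number of consecutive frames required
    _streak: int = field(default=0, init=False, repr=False)
    _alerting: bool = field(default=False, init=False, repr=False)

    def update(self, pupil_center: Optional[Point]) -> bool:
        """Return True only on the frame where a new drift event is confirmed."""
        if pupil_center is None:
            # No detection this frame (for example a blink): leave state unchanged.
            return False
        displacement = hypot(pupil_center[0] - self.baseline[0],
                             pupil_center[1] - self.baseline[1])
        if displacement > self.threshold_px:
            self._streak += 1
        else:
            # Eye is back near the baseline: reset and allow a future alert.
            self._streak = 0
            self._alerting = False
        if self._streak >= self.min_frames and not self._alerting:
            self._alerting = True
            return True
        return False


# Simulated per-frame pupil centers standing in for model output.
frames = [(100, 100), (101, 99), (118, 100), (119, 101),
          (120, 100), (121, 100), (100, 100)]
detector = DriftDetector(baseline=(100.0, 100.0))
alerts = [detector.update(p) for p in frames]
print(alerts)  # [False, False, False, False, True, False, False]
```

Requiring several consecutive frames keeps a single noisy detection from triggering the alert, and firing only once per event avoids repeating the alert on every frame while the eye remains displaced.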

Research Foundation

- [1] Hashemi, H., Pakzad, R., Heydarian, S., Yekta, A., Aghamirsalim, M., Shokrollahzadeh, F., Khoshhal, F., Pakbin, M., Ramin, S., & Khabazkhoob, M. (2019). Global and regional prevalence of strabismus: a comprehensive systematic review and meta-analysis. Strabismus, 27(2), 54–65. doi.org/10.1080/09273972.2019.1604773

- [2] Kedziora, L. (2019). Eye-tracker elements ready for workshops. www.lukaszkedziora.com/en/2019/02/22/eye-tracker-elements-ready-for-workshops/

- [3] Moon, H. S., Yoon, H. J., Park, S. W., Kim, C. Y., Jeong, M. S., Lim, S. M., Ryu, J. H., & Heo, H. (2021). Usefulness of virtual reality-based training to diagnose strabismus. doi.org/10.1038/s41598-021-85265-8

- [4] Orlosky, J. (2025). EyeTracker: A lightweight and robust Python eye tracker [Computer software]. github.com/JEOresearch/EyeTracker

- [5] Savic, N. O. (2024). Piloting virtual reality-enabled dichoptic therapy in primary optometric practice for anisometropic amblyopia in children age 9–16 years. http://www.usn.no

- [6] Thorisdottir, R. L., Malmsjö, M., Tenland, K., Blohmé, J., & Hesgaard, H. B. (2021). The Success of Unilateral Surgery for Constant and Intermittent Exotropia and Factors Affecting It in a Large Scandinavian Case Series. Journal of Pediatric Ophthalmology and Strabismus, 58(1), 34–41. doi.org/10.3928/01913913-20201007-04

- [7] Tonsen, M., Zhang, X., Sugano, Y., & Bulling, A. (2016). Labelled pupils in the wild: A dataset for studying pupil detection in unconstrained environments. Eye Tracking Research and Applications Symposium (ETRA). doi.org/10.1145/2857491.2857520

The case for EYEVA™ — in four numbers

At $345 against $15,000–$30,000, that’s a cost reduction of roughly 98–99% compared to existing clinical solutions.
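As a rough check of that figure, the $345 build cost can be set against each end of the $15,000–$30,000 range quoted earlier. This assumes a build cost and a commercial price can be compared directly, which is a simplification.

```latex
\[
1 - \frac{345}{30{,}000} = 0.9885
\qquad\text{and}\qquad
1 - \frac{345}{15{,}000} = 0.977
\]
```

The reduction therefore lands between about 98% and 99%, depending on which end of the range is used.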

See EYEVA™ in Action

Watch Aaryan demonstrate EYEVA™ — from drift detection to real-time alert — in this demo filmed at his home lab in Cerritos, California.

The Inventor

I’m a 14-year-old 8th grader from Cerritos, California. I built EYEVA™ because the problem was personal. When I learned that no affordable real-time detection device existed, I decided to build one myself.

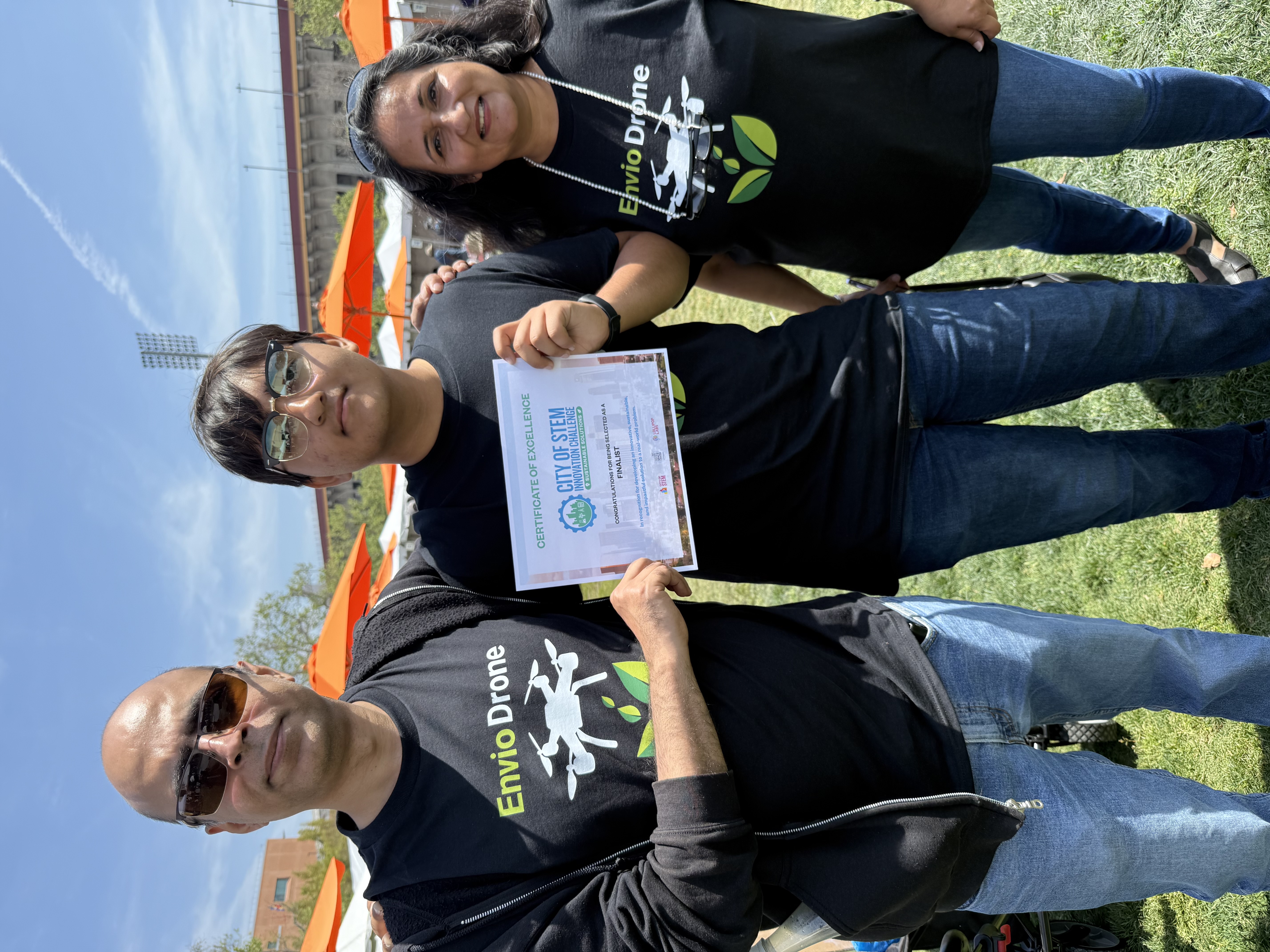

Over the course of one year, I taught myself machine learning, iterated through five hardware prototypes, conducted a 30-day pilot study, and filed a full utility patent application in my own name with the USPTO. At 13, I started with an idea. At 14, I have 100 million reasons to keep going.

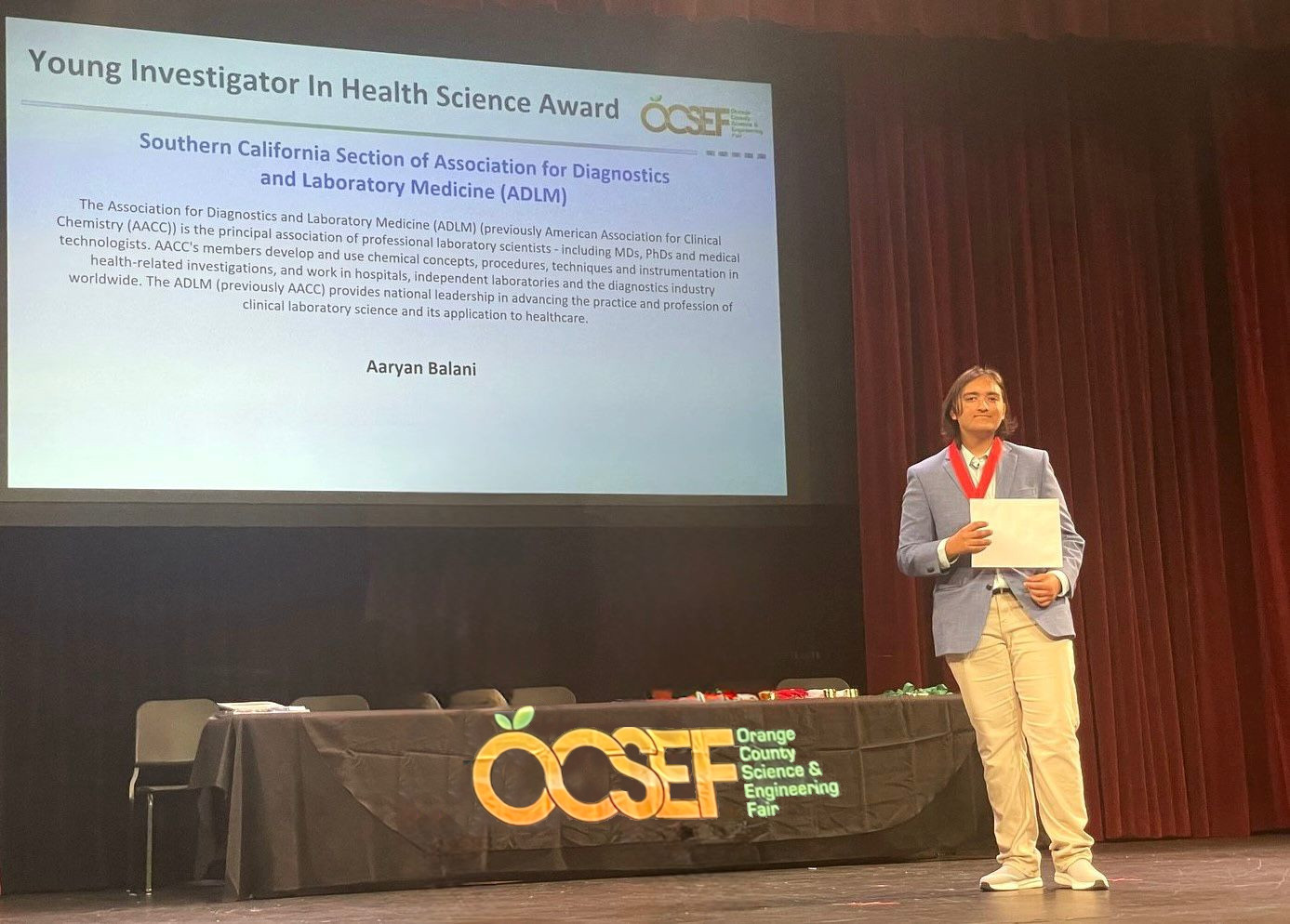

At the 71st Orange County Science & Engineering Fair at Chapman University in March 2026, EYEVA™ earned the highest award in the Biomedical Engineering category and the ADLM Young Investigator in Health Science Award, selected by professional medical scientists. I was also nominated for the Thermo Fisher Junior Innovators Challenge, the nation’s premier middle school STEM competition.


Recognition & IP

Who We’re Looking For

EYEVA™ is actively seeking partners to help bring this technology to patients at scale.

Research Partnership

Are you a faculty member, clinician, or research institution working in ophthalmology, pediatric vision science, biomedical engineering, or wearable AI?

EYEVA™ is seeking:

- University research mentors

- IRB-approved clinical study partners

- Ophthalmology and biomedical engineering lab collaborations

- Co-investigator partnerships for multi-subject clinical trials

Industry & Licensing

Are you a medical technology company, health innovation investor, or technology scout interested in AI-powered wearable medical devices?

EYEVA™ is open to discussions about:

- Licensing of patent-pending technology

- Strategic research and development partnerships

- Pilot program sponsorships

- Technology development collaborations

All IP is owned solely by Aaryan Balani. Any collaboration is welcome provided it is structured to protect existing intellectual property. Patent Pending · Trademark Pending

In the News

ABC7 Eyewitness News

Available: Live TV segment - Circle Of Health

KTLA 5 Morning News

Available: Live TV segment - Rich on Tech

Ajit Pai on X

Available: Former FCC Chairman shares the EYEVA™ story

Covering EYEVA™?

We have a full press kit with high-resolution photos, technical specifications, and background materials available on request.

Get In Touch

Whether you’re a researcher, industry partner, or member of the press, we’d love to hear from you. All inquiries are reviewed personally.